Brightness (Nits / Candelas)

WHAT ARE CANDELAS OR NITS?

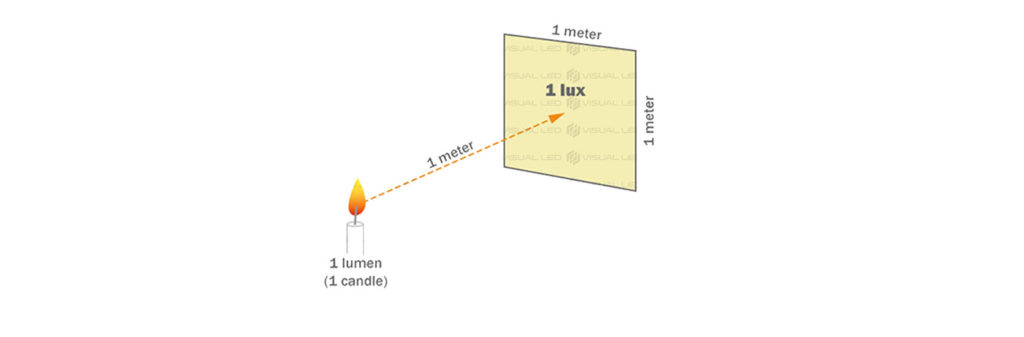

The candela per square meter (cd/m²) is the photometric unit of luminance in the International System of Units (SI). It measures the luminous intensity emitted per square meter (1 m²) of surface. This unit is used to express the brightness that an LED screen emits when showing a white image at maximum power. The unit is also known as the nit.
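As a rough illustration of the definition, the sketch below divides luminous intensity by emitting area. It assumes a flat, uniformly lit screen viewed head-on; the function name `luminance_nits` and the sample figures are made up for this example and do not describe any real product.

```python
def luminance_nits(intensity_cd: float, area_m2: float) -> float:
    """Average luminance in cd/m² (nits).

    Assumption: a flat, uniformly emitting surface viewed head-on,
    so luminance is simply luminous intensity divided by area.
    """
    if area_m2 <= 0:
        raise ValueError("area_m2 must be positive")
    return intensity_cd / area_m2


# Hypothetical screen: 2 m² emitting 12,000 cd toward the viewer.
print(luminance_nits(12_000, 2.0))  # 6000.0
```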

THE IMPORTANCE OF LUMINANCE OR BRIGHTNESS ON LED SCREENS

A higher brightness level allows an LED screen to be used in places exposed to full daylight without the content becoming hard to see.

Because of this, giant LED screens have become one of the most in-demand formats in the outdoor advertising market.

Let’s compare the brightness of LED advertising screens with that of other systems.

WHAT LUMINANCE SHOULD MY LED SCREEN HAVE?

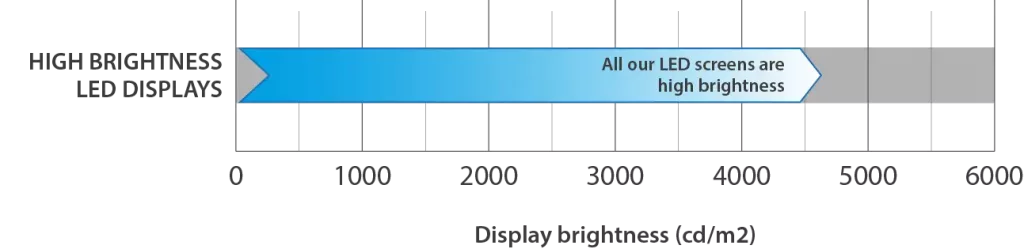

As a rule, outdoor screens should have a brightness higher than 6,000 nits. Outdoor screens are rain-resistant screens mounted on poles or on the sides of buildings, where the sun may hit them directly.

For LED screens in shop windows facing the street, a luminance between 1,500 and 3,000 nits is advised. However, if the sun shines directly and constantly on the shop window throughout the day, a luminance greater than 5,000 nits is advisable.

As for protection against rain, the brightness of a screen is independent of its environmental protection, although a weatherproof screen usually costs considerably more.
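The guidelines above can be summarized in a small lookup. This is only a sketch of the rules of thumb in this article: the function name `recommended_nits` and the placement labels `"outdoor"` and `"shop_window"` are hypothetical, and the returned values are the article's thresholds, not a standard.

```python
from typing import Optional, Tuple


def recommended_nits(placement: str,
                     constant_direct_sun: bool = False) -> Tuple[int, Optional[int]]:
    """Return (lower, upper) brightness guidance in nits.

    `upper` is None when the article only gives a lower threshold.
    Placement labels are illustrative assumptions, not an official list.
    """
    if placement == "outdoor":
        # Outdoor screens: higher than 6,000 nits.
        return (6000, None)
    if placement == "shop_window":
        if constant_direct_sun:
            # Window in direct sun all day: greater than 5,000 nits.
            return (5000, None)
        # Typical street-facing shop window: 1,500 to 3,000 nits.
        return (1500, 3000)
    raise ValueError(f"unknown placement: {placement!r}")


print(recommended_nits("shop_window"))        # (1500, 3000)
print(recommended_nits("shop_window", True))  # (5000, None)
print(recommended_nits("outdoor"))            # (6000, None)
```

Weather protection is deliberately not a parameter here, since the article treats brightness and environmental protection as independent choices.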